Written by Harry Roberts on CSS Wizardry.

One of the most fundamental rules of building fast websites is to optimise your assets, and where text content such as HTML, CSS, and JS is concerned, we’re talking about compression.

The de facto text compression of the web is Gzip, with around 80% of compressed responses favouring that algorithm, and the remaining 20% using the much newer Brotli.

Of course, this total of 100% only measures compressible responses that actually were compressed; there are still many millions of resources that could or should have been compressed but weren’t. For a more detailed breakdown of the numbers, see the Compression section of the Web Almanac.

Gzip is tremendously effective. The complete works of Shakespeare weigh in at 5.3MB in plain-text format; after Gzip (compression level 6), that number comes down to 1.9MB. That’s a 2.8× decrease in file-size with zero loss of data. Nice!

Even better for us, Gzip favours repetition: the more repeated strings found in a text file, the more effective Gzip can be. This spells great news for the web, where HTML, CSS, and JS have a very consistent and repetitive syntax.
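To see that effect for yourself, here is a minimal sketch using Python’s standard-library gzip module at level 6, the level used in the Shakespeare example. The inputs are hypothetical stand-ins: a short CSS-like rule repeated a thousand times, and the same number of random bytes, which contain almost no repetition. Exact ratios will vary, but the repetitive text should shrink dramatically, while the random bytes actually grow slightly because of Gzip’s header overhead.

```python
import gzip
import os


def compression_ratio(original_size: float, compressed_size: float) -> float:
    """How many times smaller the output is (2.8 means a 2.8x decrease)."""
    return original_size / compressed_size


def gzip_ratio(data: bytes, level: int = 6) -> float:
    """Gzip `data` at `level` and return the resulting compression ratio."""
    return compression_ratio(len(data), len(gzip.compress(data, compresslevel=level)))


# The Shakespeare figures quoted above: 5.3MB of plain text, 1.9MB after Gzip level 6.
shakespeare = compression_ratio(5.3, 1.9)

# Hypothetical inputs of equal length: highly repetitive CSS-like text vs. random bytes.
repetitive = b".btn { margin: 0; padding: 0; }\n" * 1000
random_bytes = os.urandom(len(repetitive))

print(f"Shakespeare (quoted figures): {shakespeare:.1f}x")
print(f"Repetitive CSS-like text:     {gzip_ratio(repetitive):.1f}x")
print(f"Random bytes:                 {gzip_ratio(random_bytes):.2f}x")
```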

But, while Gzip is highly effective, it’s old; it was released in 1992 (which certainly helps explain its ubiquity). 21 years later, in 2013, Google released Brotli, a new algorithm that claims even greater improvement than Gzip! That same 5.3MB Shakespeare compilation comes down to 1.7MB when compressed with Brotli (compression level 6), giving a 3.1× decrease in file-size. Nicer!

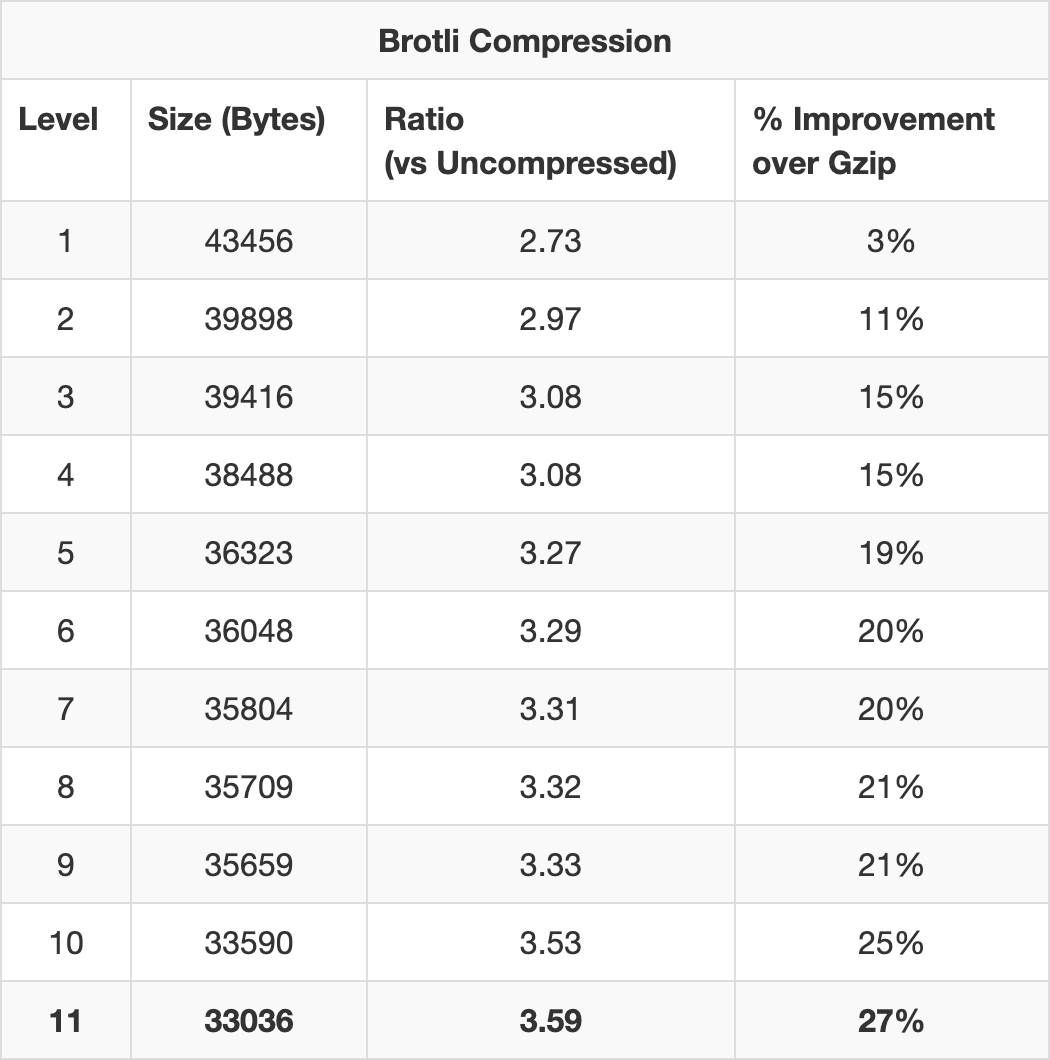

Using Paul Calvano’s Gzip and Brotli Compression Level Estimator!, you’re likely to find that certain files can earn staggering savings by using Brotli over Gzip. ReactDOM, for example, ends up 27% smaller when compressed with maximum-level Brotli compression (11) as opposed to maximum-level Gzip (9).

Compressing ReactDOM: at Brotli’s maximum setting, it’s 27% more effective than Gzip.

And speaking purely anecdotally, moving a client of mine from Gzip to Brotli led to a median file-size saving of 31%.

So, for the last several years, I, along with other performance engineers like me, have been recommending that our clients move over from Gzip to Brotli instead.

Browser Support: A brief interlude. While Gzip is so widely supported that Can I Use doesn’t even list tables for it (this HTTP header is supported in effectively all browsers, since IE6+, Firefox 2+, Chrome 1+, etc.), Brotli currently enjoys 93.17% worldwide support at the time of writing, which is huge! That said, if you’re a site of any reasonable size, serving uncompressed resources to over 6% of your customers might not sit too well with you. Well, you’re in luck. The way clients advertise their support for a particular algorithm works in a completely progressive manner, so users who can’t accept Brotli will simply fall back to Gzip. More on this later.
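That progressive fallback comes from the server choosing among whatever the client lists in its Accept-Encoding request header. Below is a simplified, hypothetical server-side chooser in Python (the function names are illustrative, not taken from any particular server): it prefers Brotli, falls back to Gzip, and otherwise sends the response uncompressed. It honours q=0 exclusions and the * wildcard, but treats a missing header as “identity only”, which is a cautious simplification rather than the full negotiation rules.

```python
from typing import Dict, Optional, Sequence


def parse_accept_encoding(header: str) -> Dict[str, float]:
    """Parse e.g. 'gzip, deflate, br;q=0.9' into {'gzip': 1.0, 'deflate': 1.0, 'br': 0.9}."""
    prefs: Dict[str, float] = {}
    for item in header.split(","):
        coding, _, params = item.strip().partition(";")
        if not coding.strip():
            continue
        q = 1.0
        for param in params.split(";"):
            name, _, value = param.strip().partition("=")
            if name.strip().lower() == "q":
                try:
                    q = float(value)
                except ValueError:
                    q = 0.0  # treat a malformed weight as "not acceptable"
        prefs[coding.strip().lower()] = q
    return prefs


def choose_encoding(header: Optional[str],
                    server_prefers: Sequence[str] = ("br", "gzip")) -> str:
    """Return the first server-preferred coding the client accepts, else 'identity'."""
    if not header:
        return "identity"  # simplification: no header means no compression
    prefs = parse_accept_encoding(header)
    default_q = prefs.get("*", 0.0)  # '*' covers codings not listed explicitly
    for coding in server_prefers:
        if prefs.get(coding, default_q) > 0:
            return coding
    return "identity"


print(choose_encoding("gzip, deflate, br"))  # → br (a Brotli-capable browser)
print(choose_encoding("gzip"))               # → gzip (an older client)
print(choose_encoding("randomstring"))       # → identity (nothing usable)
```

Because the server only ever picks from what the client offers, a client that never mentions br simply never receives Brotli.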

For the most part, particularly if you’re using a CDN, enabling Brotli should just be the flick of a switch. It’s literally that simple in Cloudflare, who I run CSS Wizardry through. However, a number of clients of mine in the past couple of years haven’t been quite so lucky. They were either running their own infrastructure, where installing and deploying Brotli everywhere proved non-trivial, or they were using a CDN that didn’t have readily available support for the new algorithm.

In instances where we were unable to enable Brotli, we were always left wondering: what if…?

So, finally, I’ve decided to attempt to quantify the question: how important is it that we move over to Brotli?

Smaller Doesn’t Necessarily Mean Faster

Usually, sure! Making a file smaller will make it arrive sooner, generally speaking. But making a file, say, 20% smaller will not make it arrive 20% earlier. This is because file-size is only one aspect of web performance, and whatever the file-size is, the resource still sits on top of a lot of other factors and constants: latency, packet loss, etc. Put another way, file-size savings help you to cram data into lower bandwidth, but if you’re latency-bound, the speed at which those admittedly fewer chunks of data arrive will not change.
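A deliberately naive model makes the point concrete: charge one round trip of latency, then the time to push the bytes through the pipe. The 100KB file, 10Mbps link, and 100ms RTT below are hypothetical numbers, and the model ignores TCP slow start entirely, but even so a 20% smaller file comes out only about 9% faster, because the latency term doesn’t shrink.

```python
def fetch_time_ms(size_kb: float, bandwidth_mbps: float, rtt_ms: float) -> float:
    """Naive fetch time: one round trip of latency plus size / bandwidth.

    Treats 1KB as 1,000 bytes: size_kb * 8 is kilobits, and kilobits divided
    by megabits-per-second comes out in milliseconds.
    """
    return rtt_ms + (size_kb * 8) / bandwidth_mbps


# Hypothetical connection: 10Mbps of bandwidth, 100ms round-trip time.
before = fetch_time_ms(100, bandwidth_mbps=10, rtt_ms=100)  # original file
after = fetch_time_ms(80, bandwidth_mbps=10, rtt_ms=100)    # 20% smaller file

print(f"{before:.0f}ms -> {after:.0f}ms: {(1 - after / before):.1%} faster")
```

The higher the latency relative to bandwidth, the smaller the share of total time that file-size savings can claw back.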

TCP, Packets, and Round Trips

Taking a very reductive and simplistic view of how files are transmitted from server to client, we need to look at TCP. When we receive a file from a server, we don’t get the whole file in one go. TCP, upon which HTTP sits, breaks the file up into segments, or packets. Those packets are sent, in batches, in order, to the client. They are each acknowledged before the next series of packets is transferred, until the client has all of them, none are left on the server, and the client can reassemble them into what we would recognise as a file. Each batch of packets gets sent in a round trip.

Each new TCP connection has no way of knowing what bandwidth it currently has available to it, nor how reliable the connection is (i.e. packet loss). If the server tried to send a megabyte’s worth of packets over a connection that only has capacity for one megabit, it’s going to flood that connection and cause congestion. Conversely, if it were to try and send one megabit of data over a connection that had one megabyte available to it, it’s not gaining full utilisation and lots of capacity is going to waste.

To tackle this little conundrum, TCP utilises a mechanism known as slow start. Each new TCP connection limits itself to sending just 10 packets of data in its first round trip. Ten TCP segments equates to roughly 14KB of data. If those ten segments arrive successfully, the next round trip will carry 20 packets, then 40, 80, 160, and so on. This exponential growth continues until one of two things happens:

- we suffer packet loss, at which point the server will halve whatever the last number of attempted packets was and retry, or;
- we max out our bandwidth and can run at full capacity.
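The growth pattern above can be simulated in a few lines. This is an idealised sketch under stated assumptions: a 1,460-byte maximum segment size (a common value, assumed here) to show where “10 segments ≈ 14KB” comes from, no packet loss, and an optional, hypothetical bandwidth ceiling at which the window stops growing. Real TCP stacks are considerably more nuanced.

```python
from typing import Iterator, Optional, Tuple

MSS_BYTES = 1460       # assumed maximum segment size per TCP packet
INITIAL_SEGMENTS = 10  # segments allowed in the first round trip


def slow_start(round_trips: int, initial_kb: float = 14.0,
               ceiling_kb: Optional[float] = None) -> Iterator[Tuple[int, float, float]]:
    """Yield (round_trip, capacity_kb, cumulative_kb) for idealised slow start.

    The window doubles every round trip (no packet loss is modelled) until it
    reaches ceiling_kb, standing in for the connection's available bandwidth.
    """
    capacity = initial_kb if ceiling_kb is None else min(initial_kb, ceiling_kb)
    cumulative = 0.0
    for rt in range(1, round_trips + 1):
        cumulative += capacity
        yield rt, capacity, cumulative
        capacity *= 2
        if ceiling_kb is not None:
            capacity = min(capacity, ceiling_kb)


print(f"First window: {INITIAL_SEGMENTS * MSS_BYTES:,} bytes")  # roughly 14KB
for rt, capacity, cumulative in slow_start(5):
    print(f"{rt:>2} {capacity:>5.0f}KB {cumulative:>5.0f}KB")
```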

This simple, elegant strategy manages to balance caution with optimism, and applies to every new TCP connection that your web application makes.

Put simply: your initial bandwidth capacity on a new TCP connection is only about 14KB. That’s roughly 11.8% of uncompressed ReactDOM; 36.94% of Gzipped ReactDOM; or 42.38% of Brotlied ReactDOM (both set to maximum compression).

Wait. The leap from 11.8% to 36.94% is pretty notable! But the change from 36.94% to 42.38% is much less impressive. What’s going on?

| Round Trips | TCP Capacity (KB) | Cumulative Transfer (KB) | ReactDOM Transferred By… |
|---|---|---|---|
| 1 | 14 | 14 | |
| 2 | 28 | 42 | Gzip (37.904KB), Brotli (33.036KB) |
| 3 | 56 | 98 | |
| 4 | 112 | 210 | Uncompressed (118.656KB) |
| 5 | 224 | 434 | |
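Using the same idealised 14KB-and-doubling model (the 14KB figure is the approximation used above, not a measured value), a small helper can compute which round-trip bucket a file lands in, along with how much of it fits in the very first round trip. Running it on the three ReactDOM sizes from the table reproduces the buckets and percentages quoted.

```python
INITIAL_WINDOW_KB = 14.0  # approximate first-round-trip capacity


def round_trips_needed(size_kb: float, initial_kb: float = INITIAL_WINDOW_KB) -> int:
    """Count round trips until the doubling window has delivered size_kb."""
    trips, window, delivered = 0, initial_kb, 0.0
    while delivered < size_kb:
        trips += 1
        delivered += window
        window *= 2
    return trips


def first_window_share(size_kb: float, initial_kb: float = INITIAL_WINDOW_KB) -> float:
    """Percentage of a (non-empty) file that fits in the first round trip, capped at 100."""
    return min(100.0, initial_kb / size_kb * 100)


reactdom_kb = {"Uncompressed": 118.656, "Gzip": 37.904, "Brotli": 33.036}
for label, size in reactdom_kb.items():
    print(f"{label:<12} {round_trips_needed(size)} round trips, "
          f"{first_window_share(size):.2f}% in the first")
```

Both compressed variants land in round trip two, which is why, in this model, Brotli’s extra saving over Gzip buys nothing for ReactDOM.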

Both the Gzipped and Brotlied versions of ReactDOM fit into the same round-trip bucket: just two round trips to get the full file transferred. If all round-trip times (RTT) are fairly uniform, this means there’s no difference in transfer time between Gzip and Brotli here.

The uncompressed version, on the other hand, takes a full two round trips more to be fully transferred, which, particularly on a high-latency connection, could be quite noticeable.

The point I’m driving at here is that it’s not just about file-size, it’s about TCP, packets, and round trips. We don’t just want to make files smaller, we want to make them meaningfully smaller, nudging them into lower round-trip buckets.

This means that, in theory, for Brotli to be notably more effective than Gzip, it’s going to need to be able to compress files quite a lot more aggressively so as to move them beneath round-trip thresholds. I’m not sure how well it’s going to stack up…

It’s worth noting that this model is quite aggressively simplified, and there are myriad more factors to take into account: is the TCP connection new or not, is it being used for anything else, is server-side prioritisation stop-starting transfer, do H/2 streams have exclusive access to bandwidth? This section is a more academic assessment and should be seen as a good jump-off point, but consider analysing the data properly in something like Wireshark, and also read Barry Pollard’s far more forensic analysis of the magic 14KB in his Critical Resources and the First 14 KB – A Review.

This rule also only applies to brand new TCP connections, and any files fetched over primed TCP connections will not be subject to slow start. This brings forth two important points:

- Not that it should need repeating: you need to self-host your static assets. This is a great way to avoid a slow start penalty, as using your own already-warmed-up origin means your packets have access to greater bandwidth, which brings me to point two;
- With exponential growth, you can see just how quickly we reach relatively massive bandwidths. The more we use or reuse connections, the further we can increase capacity until we top out. Let’s look at a continuation of the above table…

| Round Trips | TCP Capacity (KB) | Cumulative Transfer (KB) |
|---|---|---|
| 1 | 14 | 14 |
| 2 | 28 | 42 |
| 3 | 56 | 98 |
| 4 | 112 | 210 |
| 5 | 224 | 434 |
| 6 | 448 | 882 |
| 7 | 896 | 1,778 |
| 8 | 1,792 | 3,570 |
| 9 | 3,584 | 7,154 |
| 10 | 7,168 | 14,322 |
| … | … | … |
| 20 | 7,340,032 | 14,680,050 |

By the end of 10 round trips, we have a TCP capacity of 7,168KB and have already transferred a cumulative 14,322KB. This is more than adequate for casual web browsing (i.e. not torrenting or streaming Game of Thrones). In actual fact, what usually happens here is that we end up loading the entire web page and all of its subresources before we even reach the limit of our bandwidth. Put another way, your 1Gbps fibre line won’t make your day-to-day browsing feel much faster, because most of it isn’t even being used.

By 20 round trips, we’re theoretically hitting a capacity of 7.34GB.
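Both tables follow from a geometric series under the same idealised, no-loss assumption. Writing $w_0 \approx 14$KB for the first window, the window in round trip $n$ and the cumulative total after $n$ round trips are:

$$
\begin{aligned}
c_n &= w_0 \, 2^{\,n-1} \\
S_n &= \sum_{k=1}^{n} w_0 \, 2^{\,k-1} = w_0\left(2^{n} - 1\right) \\
c_{10} &= 14 \times 2^{9} = 7{,}168\text{KB}, \qquad S_{10} = 14 \times \left(2^{10} - 1\right) = 14{,}322\text{KB} \\
c_{20} &= 14 \times 2^{19} = 7{,}340{,}032\text{KB}, \qquad S_{20} = 14 \times \left(2^{20} - 1\right) = 14{,}680{,}050\text{KB}
\end{aligned}
$$

Equivalently, a file of size $s$ needs $\lceil \log_2(s/w_0 + 1) \rceil$ round trips; for uncompressed ReactDOM, $\lceil \log_2(118.656/14 + 1) \rceil = 4$.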

What About the Real World?

Okay, yeah. That all got a little theoretical and academic. I started off this whole train of thought because I wanted to see, realistically, what impact Brotli might have for real websites.

The numbers so far show that the difference between no compression and Gzip is vast, whereas the difference between Gzip and Brotli is far more modest. This suggests that while the nothing-to-Gzip gains will be noticeable, the upgrade from Gzip to Brotli might perhaps be less impressive.

I took a handful of example sites, trying to cover a good cross section of sites that were:

- relatively well-known (it’s better to use demos that people can contextualise), and/or;
- relevant and suitable for the test (i.e. of a reasonable size, where compression is more relevant, and not formed predominantly of non-compressible content like, for example, YouTube), and/or;
- not all multi-billion dollar corporations (let’s use some normal case studies, too).

With those requirements in place, I grabbed a selection of origins and began testing:

- m.fb.com

- www.linkedin.com

- www.insider.com

- yandex.com

- www.esedirect.co.uk

- www.story.one

- www.cdm-bedrijfskleding.nl

- www.everlane.com

I wanted to keep the test simple, so I grabbed only:

- data transferred, and;
- First Contentful Paint (FCP) times;

each measured:

- without compression;
- with Gzip, and;
- with Brotli.

FCP feels like a real-world and universal enough metric to apply to any site, because that’s what people are there for: content. Also because Paul Calvano said so, and he’s smart: “Brotli tends to make FCP faster in my experience, especially when the critical CSS/JS is large.”

Running the Tests

Here’s a bit of a dirty secret. A lot of web performance case studies (not all, but a lot) aren’t based on improvements, but are often extrapolated and inferred from the opposite: slowdowns. For example, it’s much simpler for the BBC to say that they lose an additional 10% of users for every additional second it takes for their site to load than it is to work out what happens for a 1s speed-up. It’s much easier to make a site slower, which is why so many people seem to be so good at it.

With that in mind, I didn’t want to find Gzipped sites and then try to somehow Brotli them offline. Instead, I took Brotlied websites and turned off Brotli. I worked back from Brotli to Gzip, then Gzip to nothing, and measured the impact that each option had.

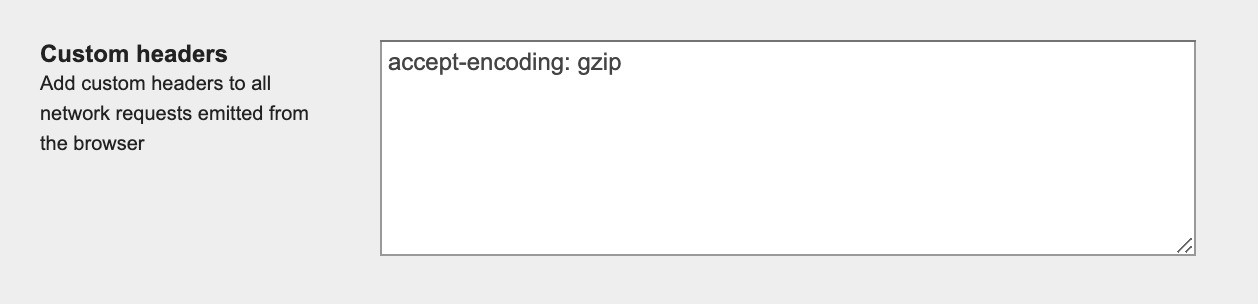

Although I can’t exactly hop onto LinkedIn’s servers and disable Brotli, I can instead choose to request the site from a browser that doesn’t support Brotli. And although I can’t exactly disable Brotli in Chrome, I can hide from the server the fact that Brotli is supported. The way a browser advertises its accepted compression algorithm(s) is via the Accept-Encoding request header, and using WebPageTest, I can define my own. Easy!


- To disable compression entirely: accept-encoding: randomstring.
- To disable Brotli but use Gzip instead: accept-encoding: gzip.
- To use Brotli if it’s available (and the browser supports it): leave blank.

I can then verify that things worked as planned by checking for the corresponding (or absent) content-encoding header in the response.
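The tests here used WebPageTest, but if you just want to spot-check which encoding a server sends for each of those three Accept-Encoding values, Python’s standard-library urllib is enough. This is a hedged sketch, not part of the original methodology: it only inspects the Content-Encoding response header (it measures nothing about FCP), the “gzip, deflate, br” value is an assumed stand-in for a Brotli-capable browser’s default, and a server may still treat a script differently from a real browser.

```python
import urllib.request

# Accept-Encoding values for the three scenarios described above.
# "gzip, deflate, br" is an assumed stand-in for a Brotli-capable browser.
SCENARIOS = {
    "no compression": "randomstring",
    "Gzip only": "gzip",
    "Brotli allowed": "gzip, deflate, br",
}


def build_request(url: str, accept_encoding: str) -> urllib.request.Request:
    """Build a GET request that advertises exactly the given Accept-Encoding."""
    return urllib.request.Request(url, headers={"Accept-Encoding": accept_encoding})


def served_encoding(url: str, accept_encoding: str, timeout: float = 10.0) -> str:
    """Fetch url and return its Content-Encoding header ('identity' if absent).

    urllib does not decompress response bodies, so the header reflects exactly
    what the server chose to send.
    """
    with urllib.request.urlopen(build_request(url, accept_encoding), timeout=timeout) as resp:
        return resp.headers.get("Content-Encoding", "identity")
```

Looping over SCENARIOS.items() and calling served_encoding() for each value against a Brotli-enabled origin, you would expect identity, gzip, and br in turn.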

Findings

As expected, going from nothing to Gzip brought a massive reward, but going from Gzip to Brotli was far less impressive. The raw data is available in this Google Sheet, but the things we mainly care about are:

- Gzip size reduction vs. nothing: 73% decrease
- Gzip FCP improvement vs. nothing: 23.305% decrease
- Brotli size reduction vs. Gzip: 5.767% decrease
- Brotli FCP improvement vs. Gzip: 3.462% decrease

All values are medians; ‘size’ refers to HTML, CSS, and JS only.

Gzip made files about 73% smaller than not compressing them at all, but Brotli only saved us a further 5.8% or so over that. In terms of FCP, Gzip gave us a 23% improvement when compared to using nothing at all, but Brotli only gained us an extra 3.5% on top of that.

While the results do seem to back up the theory, there are a few ways in which I could have improved the tests. The first would be to use a much larger sample size, and the other two I shall outline more fully below.
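Part of why Brotli’s extra gain looks so small is that it’s a percentage of an already-Gzipped size. A rough, illustrative sketch: take a hypothetical 100KB of uncompressed HTML, CSS, and JS and apply the two median size reductions in sequence. Medians from different sites don’t strictly compose like this, so treat the output as an order-of-magnitude guide rather than a result.

```python
def after_decrease(size_kb: float, percent: float) -> float:
    """Size remaining after a percentage decrease."""
    return size_kb * (1 - percent / 100)


uncompressed_kb = 100.0                          # hypothetical page weight
gzip_kb = after_decrease(uncompressed_kb, 73)    # median Gzip reduction vs. nothing
brotli_kb = after_decrease(gzip_kb, 5.767)       # median Brotli reduction vs. Gzip

print(f"Gzip saves   {uncompressed_kb - gzip_kb:.2f}KB")
print(f"Brotli saves {gzip_kb - brotli_kb:.2f}KB more")
```

An extra 1.5KB or so is roughly a tenth of a single ~14KB slow-start round trip, so on its own it will rarely nudge a file into a lower round-trip bucket.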

First-Party vs. Third-Party

In my tests, I disabled Brotli across the board and not just for the first-party origin. This means that I wasn’t measuring only the target’s benefits of using Brotli, but potentially all of their third parties as well. This only really becomes of interest to us if a target site has a third party on their critical path, but it’s worth bearing in mind.

Compression Levels

When we talk about compression, we often discuss it in terms of best-case scenarios: level-9 Gzip and level-11 Brotli. However, it’s unlikely that your web server is configured in the most optimal way. Apache’s default Gzip compression level is 6, but Nginx is set to just 1.

Disabling Brotli means we fall back to Gzip, and given how I’m testing the sites, I can’t alter or influence any configurations or fallbacks’ configurations. I mention this because two sites in the test actually got larger when we enabled Brotli. To me this suggests that their Gzip compression level was set to a higher value than their Brotli level, making Gzip more effective.

Compression levels are a trade-off. Ideally you’d want to set everything to the highest setting and be done, but that’s not really practical: the time taken on the server to do that dynamically would likely nullify the benefits of compression in the first place. To combat this, we have two options:

- use a pragmatic compression level that balances speed and effectiveness when dynamically compressing assets, or;
- upload precompressed static assets with a much higher compression level, and use the first option for dynamic responses.
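The second option can be as simple as a build step. Below is a minimal, hypothetical Python sketch that writes a maximum-level (9) .gz copy next to every compressible static file, so the expensive compression happens once at build time rather than on every request. The extension list is an assumption (it deliberately includes .ico and .ttf); Brotli would need a third-party library, so the sketch sticks to Gzip; and your server still has to be configured to serve the .gz files (Nginx’s gzip_static directive is one way to do that).

```python
import gzip
from pathlib import Path
from typing import List

# Assumed set of compressible static-asset extensions; adjust to suit.
COMPRESSIBLE = {".html", ".css", ".js", ".svg", ".json", ".ico", ".ttf"}


def precompress(root: Path, level: int = 9) -> List[Path]:
    """Write a .gz sibling for every compressible file under root.

    Returns the paths written. mtime=0 keeps the output byte-identical across
    builds when the source file hasn't changed.
    """
    written: List[Path] = []
    # sorted() materialises the listing first, so new .gz files aren't revisited.
    for path in sorted(root.rglob("*")):
        if path.is_file() and path.suffix.lower() in COMPRESSIBLE:
            target = path.with_name(path.name + ".gz")
            compressed = gzip.compress(path.read_bytes(), compresslevel=level, mtime=0)
            target.write_bytes(compressed)
            written.append(target)
    return written
```

You might call precompress(Path("dist")) at the end of a build, where dist is a hypothetical output directory.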

So What?

It would seem that, realistically, the benefits of Brotli over Gzip are slight. If enabling Brotli is as simple as flicking a checkbox in the admin panel of your CDN, please go ahead and do it right now: support is wide enough, fallbacks are simple, and even minimal improvements are better than none at all.

Where possible, upload precompressed static assets to your web server with the highest possible compression setting, and use something more middle-ground for anything dynamic. If you’re running on Nginx, please ensure you aren’t still on their pitiful default compression level of 1.

However, if you’re faced with the prospect of weeks of engineering, testing, and deployment effort to get Brotli live, don’t panic too much: just make sure you have Gzip on everything that you can compress (that includes your .ico and .ttf files, if you have any).

I guess the short version of the story is that if you haven’t or can’t enable Brotli, you’re not missing out on much.